Data Shows Most Runners Don't Actually Get Faster

How do runners get faster? We analyzed 856,000 running activities to find out who improves. It wasn't the intense runners, but the consistent ones. Showing up month after month beat everything flashier. Boring, as it turns out, is fast.

May 7, 2026

Key takeaways

- Most runners don't actually get faster: about half the field improved their speed-at-heart-rate over a year of training, and about half declined. The average runner essentially stayed where they started.

- Consistency beat intensity, every time: the biggest predictor of getting faster wasn't how hard runners trained, but how reliably they showed up. Month-to-month consistency outperformed every other variable tested.

- Hard sessions without volume trended backwards: runners who pushed nearly half their sessions at high intensity but ran less overall actually got slower on average. The dose-response was clear: volume and consistency drove improvement, not intensity in isolation.

In my previous Terra Research work on marathon training, I linked training patterns to race-day performance. The findings were striking: more easy running predicted faster finishes, and excessive intensity predicted slower ones. But there was always a problem underlying that analysis: a marathon is a single event, and people don't always run at their maximum. Some are pacing for a friend. Some blow up at mile 20. Some treat it as a training run. Tying months of preparation to one imperfect data point felt like it was leaving signal on the table.

As I continued to plug away on the European activity dataset I've written the previous two articles on, I realized I could ask a different question. Instead of linking training to a race result, could I use the training data itself to measure fitness change? No event required. No assumptions about effort on the day. Just the relationship between how fast someone runs and how hard their heart is working, tracked over time, across thousands of people.

The speed-HR curve is one of the oldest tools in exercise physiology. If you can run faster at the same heart rate, your aerobic engine has improved. The question was whether this approach — applied at scale to noisy, real-world wearable data from consumer devices — would actually detect anything meaningful. Or whether it would drown in noise.

The answer from 641 runners and five independent analytical approaches came back remarkably clearly.

How I Measured Aerobic Fitness Using Heart Rate

Performance is notoriously hard to measure from wearable data. I don't have VO₂max tests, lactate curves, or controlled time trials. What I do have, for every run, is speed and heart rate, and in this dataset, it's complicated further by the fact I'm working with session averages, not the granular second-by-second data.

Average speed and average heart rate hide much of what is interesting about the physiology of a session: the surges up hills, the recoveries, the drift in the final kilometers. This is the fundamental tension in big data research. You tend to have either depth or breadth; ten sensors on one athlete in a lab, or one number per session from nine thousand people in the wild. The hope is that with enough people, enough runs, and enough time, the signal buried in imperfect averages remains real.

The logic is straightforward: if you can run faster at the same heart rate, your aerobic engine has improved. The relationship between sub-maximal heart rate and aerobic fitness is well-established — Schimpchen et al. (2023), in a systematic review of 14 studies, found that most reported large associations between changes in HR at standardized sub-maximal running speeds and changes in aerobic fitness (r=0.51–0.88), though three studies found no such relationship.

Buchheit (2014) makes a similar case, comprehensively reviewing the evidence that submaximal exercise heart rate reliably tracks changes in fitness and fatigue across a range of endurance sports. What's less well-established is whether this approach works at the population scale under free-living conditions, using only the session-average data produced by a GPS watch. What I've tried to do here is apply the same principle across 641 runners simultaneously.

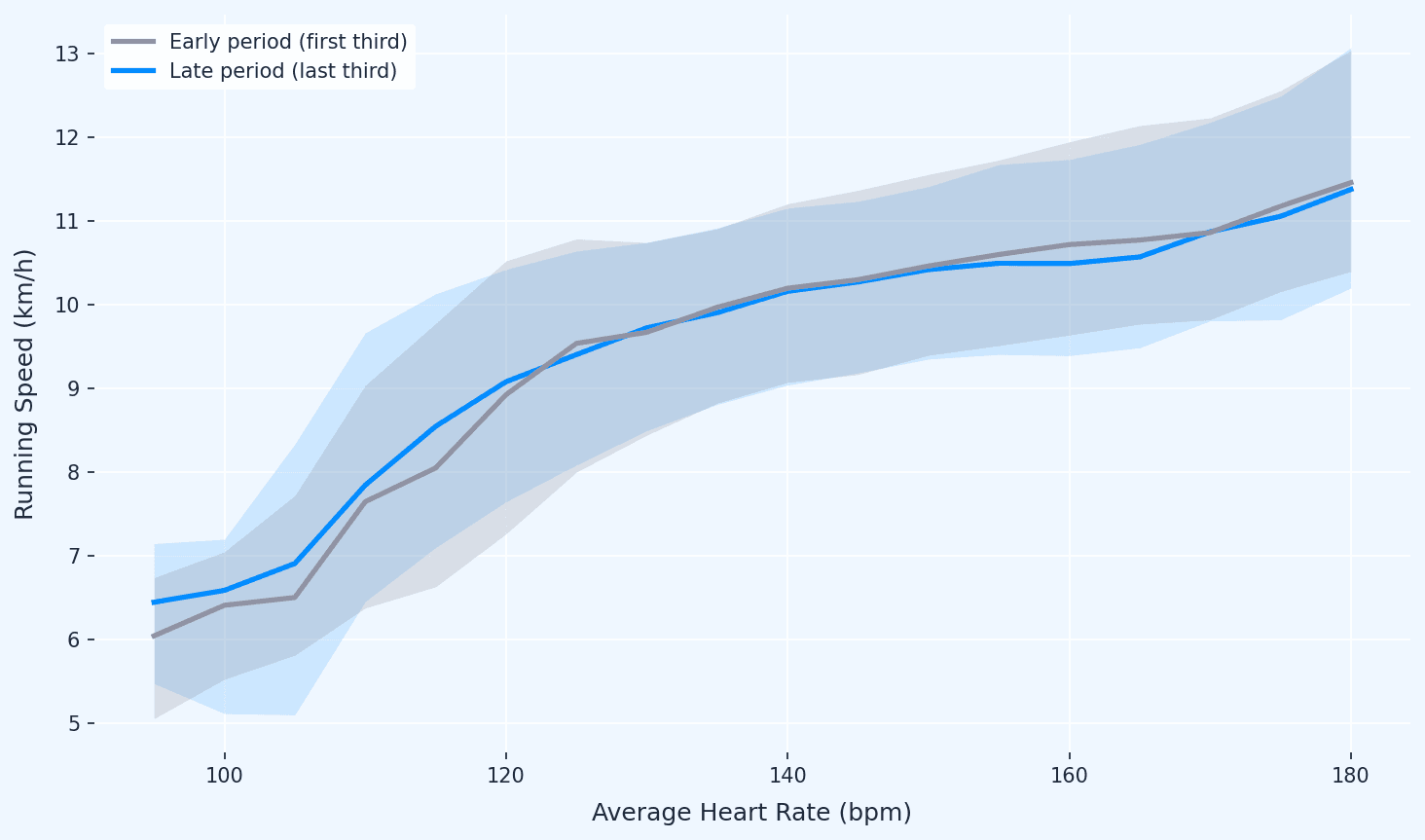

I built a speed-HR curve for each runner by binning their average heart rate into 5 bpm windows and taking the median speed in each bin. I then compared curves from their first third of recorded activities to their last third — a within-person design that controls for individual differences in genetics, device, and baseline fitness.

To qualify, a runner needed at least 20 runs spanning 6 or more months, with valid heart rate data and at least two matched HR bins between periods. That left me with 641 runners from the original 9,437-user European cohort.
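The curve comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the production pipeline: the function names, the `(avg_hr, avg_speed)` tuple layout, and the way the first and last thirds are sliced are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import median

BIN_WIDTH = 5  # bpm, the bin width described in the text


def speed_hr_curve(runs):
    """Map each 5 bpm heart-rate bin to the median speed of the runs in it.

    `runs` is a list of (avg_hr_bpm, avg_speed_kmh) tuples, one per session.
    """
    bins = defaultdict(list)
    for hr, speed in runs:
        bins[int(hr // BIN_WIDTH) * BIN_WIDTH].append(speed)
    return {b: median(speeds) for b, speeds in bins.items()}


def speed_hr_shift(runs, n_splits=3, min_matched_bins=2):
    """Median speed change (late minus early) across matched HR bins.

    `runs` must be in chronological order. Returns None when the periods
    are empty or share fewer than `min_matched_bins` heart-rate bins.
    """
    size = len(runs) // n_splits
    if size == 0:
        return None
    early = speed_hr_curve(runs[:size])   # first third by default
    late = speed_hr_curve(runs[-size:])   # last third by default
    matched = sorted(set(early) & set(late))
    if len(matched) < min_matched_bins:
        return None
    return median(late[b] - early[b] for b in matched)


# Toy runner (made-up numbers): six sessions in chronological order.
toy_runs = [(142, 9.8), (147, 10.4), (150, 10.6),
            (139, 9.5), (143, 10.0), (148, 10.5)]
print(speed_hr_shift(toy_runs))  # about 0.15 km/h faster at matched heart rates
```

A positive value means the runner is covering more ground at the same heart rate late in their history than early on. Changing `n_splits` gives the halves, quarters, fifths, and tenths variants of the same comparison.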

There are a few assumptions worth naming honestly. First, I'm assuming runners are doing broadly similar types of runs across the observation period, but I can't tell whether someone has shifted from road to trail, or started doing significantly more hilly routes. Both would affect the HR-pace relationship independently of any fitness change.

Second, weather and season affect HR at a given pace: running in summer heat inflates heart rate relative to the same effort in winter. I initially hoped that the sheer size of the dataset, with runners joining at different times of year, might naturally average this out.

When I checked, it didn't, not fully: 50% of early periods happened to start in winter, likely reflecting a January "new year" joining pattern, so the seasonal distribution isn't random. The proper fix is a season-controlled replication, where I compare the same calendar quarters year-on-year. I ran that analysis on 419 qualifying users and the results were nearly identical. The seasonal confound isn't driving the findings.

The Findings: What Running Training Data Reveals

- Wearable data can detect aerobic fitness shifts — no lab required

By comparing matched heart rate bins within each runner across time, I can quantify whether someone is running faster at the same cardiac cost. Across 641 users with a median observation window of 3.5 years, the method produces stable, interpretable estimates.

This matters commercially: any product wanting to show users their fitness is changing can do it with speed and heart rate alone.

- At population level, average improvement is close to zero — and that's the point

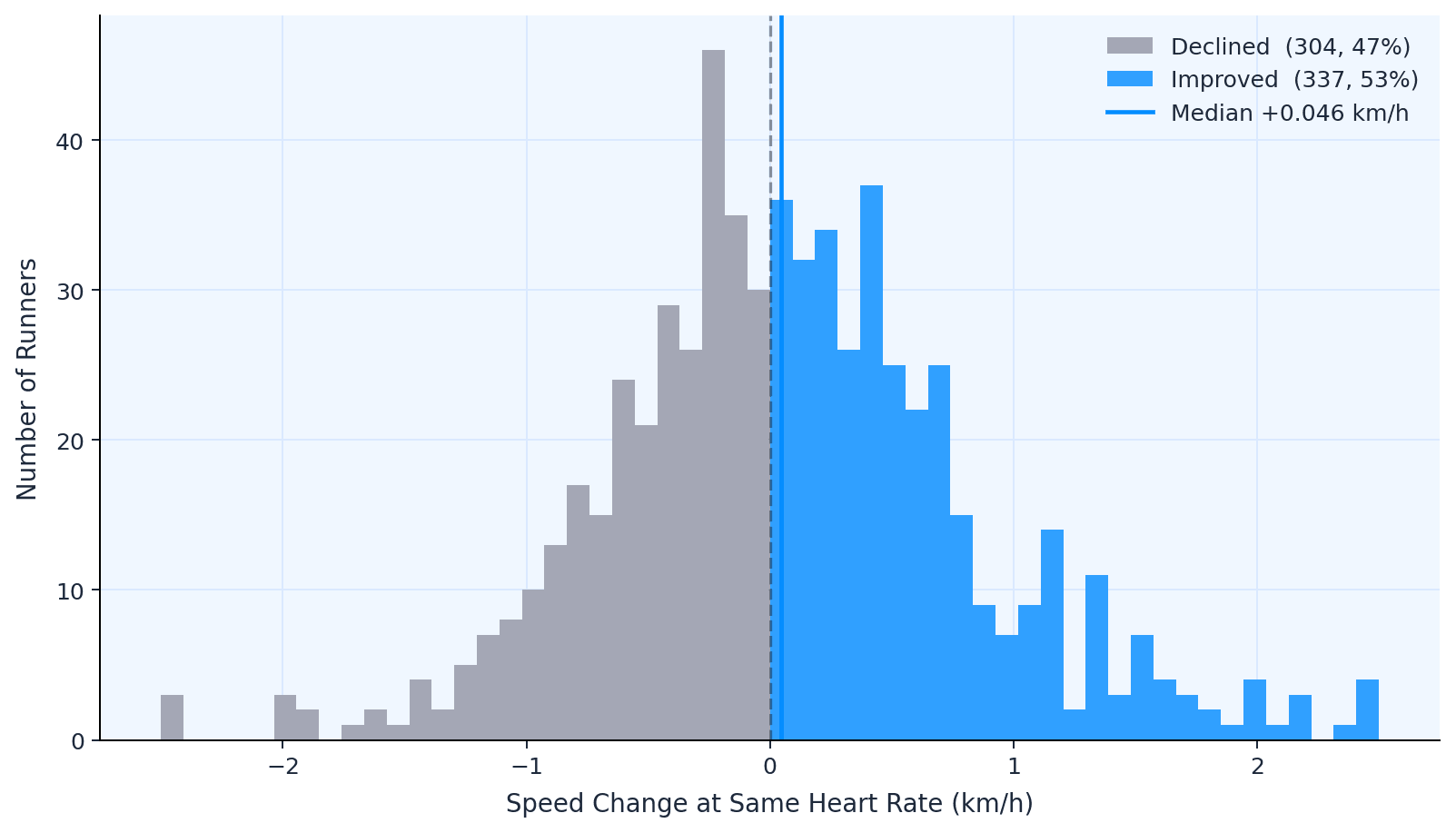

The median speed change at matched heart rates was +0.046 km/h. When I controlled for season, comparing the same calendar quarters year-on-year to remove the effect of summer fitness vs winter detraining, it dropped to +0.002 km/h. Roughly 50% of runners improved, 50% didn't.

This might look like a disappointing result. It's actually the opposite; it's the strongest evidence that the method is measuring something real.

If the speed-HR approach were picking up noise or systematic drift (device calibration shifting over time, seasonal artefacts, compounding measurement error), I'd expect a consistent bias in one direction. Everyone would appear to "improve" or "decline" together. Instead, I see an almost perfect 50/50 split with a median barely distinguishable from zero. That's exactly what a general population of recreational runners should look like. Most people maintain fitness. They don't dramatically gain or lose it.

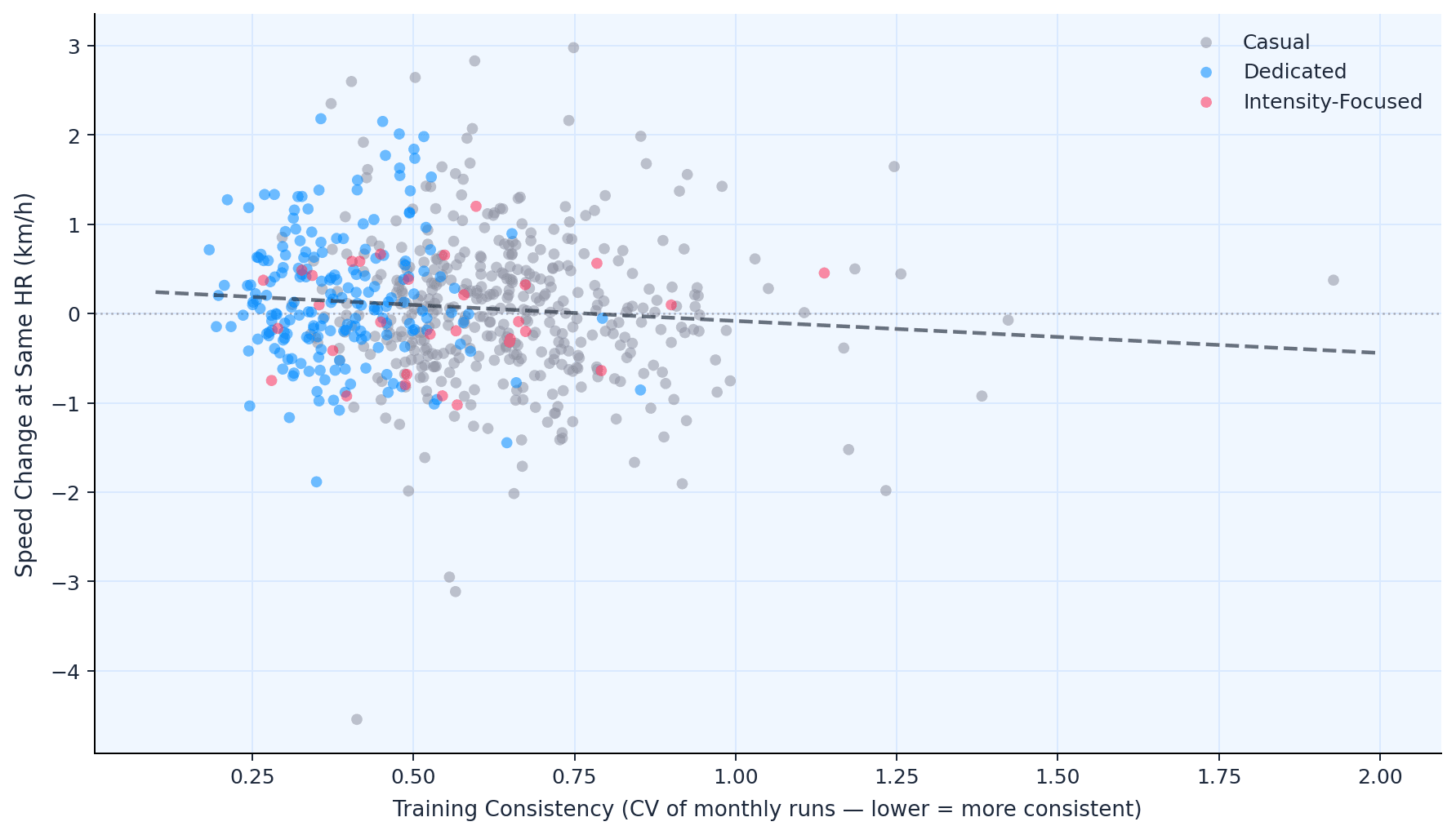

The validation comes in the next step. When I stratify by training behavior, the groups separate: Dedicated runners improve, Casual runners don't, and Intensity-Focused runners trend negative. If this were just noise, slicing by phenotype wouldn't produce different outcomes.

The fact that it does, and in the physiologically expected direction, is what turns a flat average into a credible method.

- The individual variation is enormous

The interquartile range spans nearly 0.9 km/h (−0.39 to +0.50). Some runners improved by over 2 km/h at the same heart rate; others declined by the same margin. The population average is a poor summary of a dataset this spread. The real question isn't whether improvement happens — it's for whom.

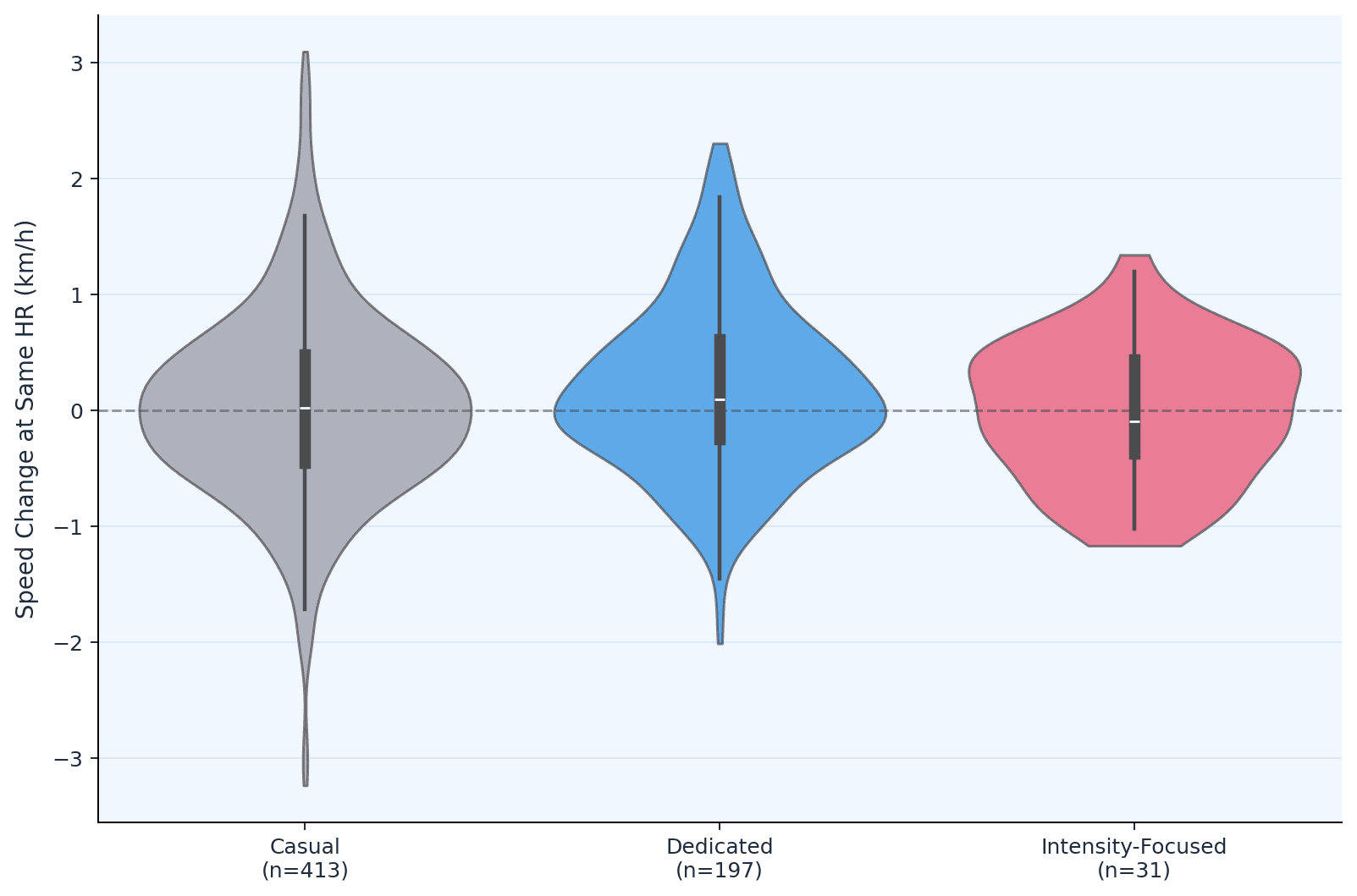

- Three distinct training phenotypes emerge from the data

I used k-means clustering on four training dimensions (monthly run frequency, monthly distance, consistency, and intensity) to identify natural groupings. Three phenotypes emerged:

- Casual runners (n=413, 64%): ~4 runs per month, 30 km/month, high month-to-month variability (CV=0.63). The majority of the dataset.

- Dedicated runners (n=197, 31%): ~12 runs per month, 120 km/month, low variability (CV=0.37). Steady, committed, moderate-intensity.

- Intensity-Focused runners (n=31, 5%): ~9 runs per month, 63 km/month, but 74% HR reserve and 45% of sessions at high intensity. They push hard.
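For readers unfamiliar with the technique, here is a minimal, dependency-free sketch of k-means on standardized training features. It is plain Lloyd's algorithm with a deterministic farthest-point seeding chosen for reproducibility; the function names and the toy rows are illustrative assumptions, not the data or code behind the phenotypes above. In practice a library implementation such as scikit-learn's `KMeans` would be the usual choice.

```python
from statistics import mean, pstdev


def standardize(rows):
    """Z-score each column so no single feature dominates the distance."""
    cols = list(zip(*rows))
    mus = [mean(c) for c in cols]
    sds = [pstdev(c) or 1.0 for c in cols]  # guard against constant columns
    return [[(v - m) / s for v, m, s in zip(row, mus, sds)] for row in rows]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((p - q) ** 2 for p, q in zip(a, b))


def kmeans(rows, k=3, n_iter=100):
    """Lloyd's algorithm; returns one cluster label per row."""
    # Seeding: start from the first row, then repeatedly add the row
    # farthest from all centers chosen so far (an assumption of this sketch).
    centers = [rows[0]]
    while len(centers) < k:
        centers.append(max(rows, key=lambda r: min(sq_dist(r, c) for c in centers)))
    labels = None
    for _ in range(n_iter):
        new_labels = [min(range(k), key=lambda j: sq_dist(r, centers[j])) for r in rows]
        if new_labels == labels:  # assignments stopped changing
            break
        labels = new_labels
        for j in range(k):
            members = [r for r, lab in zip(rows, labels) if lab == j]
            if members:
                centers[j] = [mean(col) for col in zip(*members)]
    return labels


# Toy rows (made-up): runs/month, km/month, monthly CV, high-intensity fraction.
toy = [
    (4, 30, 0.63, 0.10), (5, 32, 0.60, 0.12), (3, 28, 0.66, 0.08),
    (12, 120, 0.37, 0.10), (13, 125, 0.35, 0.12), (11, 115, 0.39, 0.09),
    (9, 63, 0.50, 0.45), (10, 66, 0.48, 0.47), (8, 60, 0.52, 0.43),
]
print(kmeans(standardize(toy), k=3))
```

Standardizing first matters because km/month spans a far larger numeric range than CV or intensity fraction; without it, distance would drive the clusters almost entirely.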

- Dedicated runners are the ones who improve

The Dedicated group showed a median speed-HR improvement of +0.100 km/h, with 56% improving, which is significantly better than Casual runners (p=0.006, Mann-Whitney U). Their defining training feature isn't pace or intensity. It's reliability: 12 runs a month at moderate effort with minimal dropout.

To look at this another way, at a given heart rate, Dedicated runners were running 0.1 km/h faster by the end of the observation period than at the start — about 3–4 seconds per kilometer — without their heart working any harder.
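The conversion from a speed gain to a pace gain is simple arithmetic, and it depends on the starting speed. The sketch below uses the baseline speeds quoted later in this article (10.7 km/h for Dedicated runners, 9.7 km/h for Casual runners); the function names are my own.

```python
def pace_seconds_per_km(speed_kmh):
    """Convert a speed in km/h to a pace in seconds per kilometer."""
    return 3600.0 / speed_kmh


def pace_gain(speed_kmh, delta_kmh):
    """Seconds per kilometer saved by running `delta_kmh` faster."""
    return pace_seconds_per_km(speed_kmh) - pace_seconds_per_km(speed_kmh + delta_kmh)


print(round(pace_gain(10.7, 0.1), 1))  # 3.1 s/km
print(round(pace_gain(9.7, 0.1), 1))   # 3.8 s/km
```

The same 0.1 km/h is worth slightly more seconds per kilometer to a slower runner, which is why the figure is best quoted as a range.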

- Intensity without volume trends negative

The Intensity-Focused group — who run at 74% HR reserve with nearly half their sessions at high intensity — showed a median decline of −0.090 km/h. There are two caveats. First, this is a small group (n=31), so I should hold the findings lightly. Second, I haven't formally controlled for volume in the phenotype comparison — the Intensity-Focused group differs from Dedicated runners on both intensity and total mileage simultaneously, so I can't cleanly isolate which factor drives the decline.

That said, there's a natural experiment embedded in the data that's worth noting. The Intensity-Focused group runs considerably more than the average Casual runner — 63 km per month versus 30 km — yet their speed-HR curve moves in the wrong direction. When I compared them to Casual runners running the same mileage, those low-intensity runners at equivalent volume improved by a median of +0.070 km/h; the Intensity-Focused group declined by −0.090 km/h. The direction is suggestive: it's not simply that they don't run enough. In the continuous variable analysis across all 641 users, high-intensity fraction showed a negative coefficient after controlling for volume, though the sample is too small for statistical confidence.

This aligns with what I found in my earlier marathon research, where athletes training with excessive time at threshold pace tended to run slower on race day, not faster. Two different methods, same direction. It's also coherent with established exercise physiology: high-intensity work improves anaerobic capacity and race-specific speed, but shifting the aerobic efficiency curve appears to require volume.

There is clearly a lot going on here, both with the physiology and other factors. I think it's a safe assumption that the high-intensity-focused runners are at the greatest injury risk, which would have a significant impact on the analysis.

- There's a clear dose-response for volume

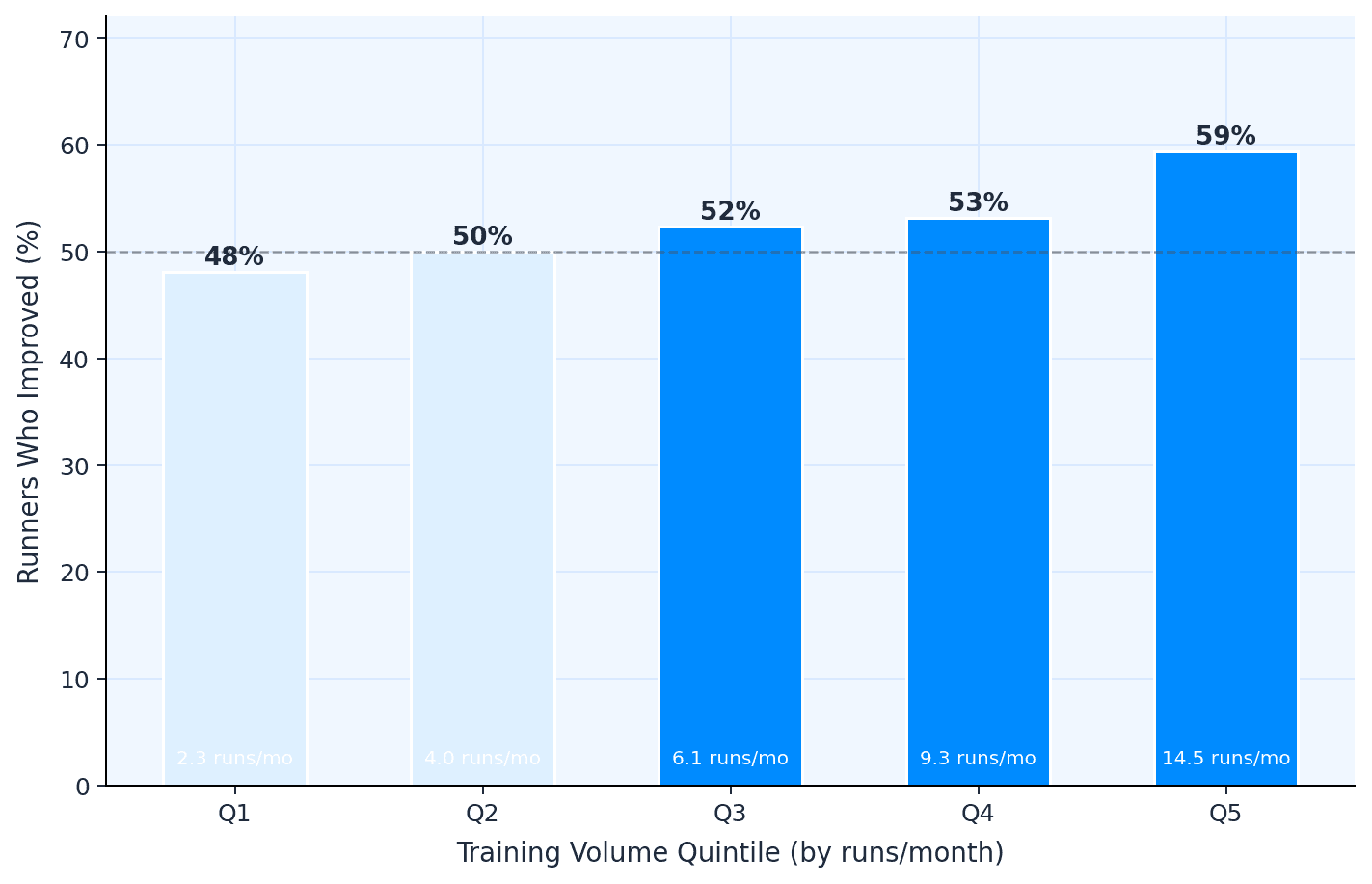

I split the 641 runners into five equal groups by monthly run frequency. The bottom quintile (2.3 runs/month) saw 47% improve. The top quintile (14.5 runs/month) saw 64% improve — a 17-percentage-point gap. The relationship is roughly linear across quintiles and statistically significant (r=0.097, p=0.014).
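A rough sketch of the quintile comparison, assuming each runner is reduced to a pair of (runs per month, speed change at matched heart rate). The function name and the toy values are illustrative only, and group sizes differ by at most one runner when the total isn't divisible by five.

```python
def share_improved_by_group(runners, n_groups=5):
    """Fraction of runners with a positive speed change in each frequency group.

    `runners` is a list of (runs_per_month, speed_change_kmh) tuples.
    Groups are ordered from lowest to highest run frequency.
    """
    if len(runners) < n_groups:
        raise ValueError("need at least one runner per group")
    ranked = sorted(runners, key=lambda r: r[0])
    n = len(ranked)
    shares = []
    for g in range(n_groups):
        group = ranked[g * n // n_groups:(g + 1) * n // n_groups]
        improved = sum(1 for _, change in group if change > 0)
        shares.append(improved / len(group))
    return shares


# Toy data (made-up): ten runners, so two per quintile.
toy = [(1, -0.1), (2, -0.2), (3, 0.1), (4, -0.1), (5, 0.1),
       (6, -0.1), (7, 0.2), (8, 0.1), (9, 0.3), (10, 0.2)]
print(share_improved_by_group(toy))  # [0.0, 0.5, 0.5, 1.0, 1.0]
```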

- Controlling for baseline fitness makes the training signal stronger, not weaker

Better runners have less room to improve — this is a well-known ceiling effect, and I needed to check whether it was confounding the phenotype comparison. Dedicated runners do start faster at baseline (10.7 km/h, around 5:36 per kilometer) compared to Casual runners (9.7 km/h, 6:09 per kilometer). The ceiling effect is real: baseline speed correlates negatively with improvement (r=−0.30, p<0.0001), meaning faster runners as a group improve less, as expected.

But Dedicated runners improve more despite this working against them. When I controlled for baseline speed, the volume and consistency effects didn't disappear — they roughly doubled:

| Variable | Raw r | Partial r, controlling for baseline speed |
|---|---|---|
| Runs per month | 0.090 | 0.188 (p<0.0001) |
| km per month | 0.080 | 0.212 (p<0.0001) |
| Consistency (CV) | −0.093 | −0.154 (p<0.0001) |
| Intensity fraction | −0.001 | 0.034 (p=0.39) |
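To see why controlling for baseline can strengthen a correlation rather than weaken it, here is a sketch of the standard first-order partial correlation formula. I'm assuming a Pearson-based partial correlation for illustration; the function names are my own, and the volume-to-baseline correlation used in the example (0.40) is a made-up value, so the output is not expected to reproduce the table.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def partial_from_r(r_xy, r_xz, r_yz):
    """Correlation of x and y with the linear effect of z removed from both."""
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def partial_r(x, y, z):
    """Partial correlation of x and y given z, computed from raw data."""
    return partial_from_r(pearson_r(x, y), pearson_r(x, z), pearson_r(y, z))


# x = km per month, y = speed-HR improvement, z = baseline speed.
# r_xy and r_yz come from the text; r_xz = 0.40 is hypothetical.
print(round(partial_from_r(r_xy=0.080, r_xz=0.40, r_yz=-0.30), 3))  # roughly 0.229
```

When higher-volume runners also start faster (positive `r_xz`) and faster runners improve less (negative `r_yz`), the product `r_xz * r_yz` is negative, so subtracting it raises the numerator. That is the suppression pattern the table shows.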

The within-quartile comparison confirms this is about training behavior, not pre-existing fitness. Among runners in the same baseline speed band (9.1–10.1 km/h), Dedicated runners improved by a median of +0.230 km/h while Casual runners improved by just +0.032. In the next quartile up (10.1–11.0 km/h), Dedicated runners stayed positive (+0.046) while Casual runners declined (−0.214). The training behavior signal is present at every fitness level.

- Consistency predicts improvement at least as well as volume

Month-to-month variability in training — measured as the coefficient of variation in monthly run count — was one of the most robust predictors of speed-HR improvement, with a partial correlation of r=−0.154 (p<0.0001) after controlling for baseline fitness. Runners with low variability (CV below 0.4) clustered above zero; those with erratic patterns (CV above 0.7) trended negative.

In plain terms, it's not just how much you run. It's whether you keep showing up. It is the single most durable finding in this analysis.
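The consistency metric itself is easy to compute. A minimal sketch, assuming the population standard deviation and assuming months with zero runs are included in the series (the exact convention isn't spelled out above, and the toy counts are made up):

```python
from statistics import mean, pstdev


def monthly_cv(monthly_run_counts):
    """Coefficient of variation of monthly run counts (std dev / mean).

    Returns None if the runner logged no runs at all.
    """
    avg = mean(monthly_run_counts)
    if avg == 0:
        return None
    return pstdev(monthly_run_counts) / avg


steady = [12, 11, 13, 12, 12, 12]   # shows up every month
erratic = [0, 10, 2, 0, 8, 4]       # bursts and gaps

print(round(monthly_cv(steady), 2))   # 0.05
print(round(monthly_cv(erratic), 2))  # 0.96
```

Both toy runners could log a similar number of good weeks; the CV captures the gaps between them.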

- Intensity alone doesn't predict improvement

Neither before nor after controlling for baseline fitness does high-intensity session fraction predict speed-HR improvement (raw r=−0.001, p=0.98; partial r=0.034, p=0.39). The signal in this dataset is in volume and consistency, not in how hard individual sessions are. This doesn't mean intensity is useless — it almost certainly matters for race-specific fitness and anaerobic capacity — but for shifting the aerobic efficiency curve, the data points elsewhere.

- This holds up no matter how you slice the data

I tested five different temporal splits: halves, thirds, quarters, fifths, and tenths. Each compares a progressively narrower early window to a progressively narrower late window.

| Split | N users | Median shift (km/h) | Consistency r (p) | Share improved, Q5 vs Q1 |
|---|---|---|---|---|
| Halves | 641 | +0.024 | −0.123 (0.002) | 63% vs 47% |
| Thirds | 641 | +0.042 | −0.121 (0.002) | 64% vs 47% |
| Quarters | 641 | +0.013 | −0.108 (0.006) | 60% vs 44% |
| Fifths | 638 | +0.029 | −0.087 (0.027) | 56% vs 45% |
| Tenths | 584 | +0.014 | −0.089 (0.032) | 57% vs 47% |

Thirds produced the highest signal-to-noise ratio, but the story is identical across all five: consistency predicts improvement in every split. The Dedicated phenotype always improves most. The Q5-vs-Q1 dose-response always holds. As windows narrow, noise increases — fewer runs per window means less stable curve estimates — but the direction never changes.

This is the most methodologically reassuring finding in the analysis. The conclusions aren't an artefact of how I chose to divide the data.

What This Means For Runners, Coaches, and The Wearable Industry

For runners: The data makes a case for the oldest advice in the sport. Don't do anything dramatic. Don't chase every session at threshold. Build a routine you can sustain month after month, and the aerobic system will respond — slowly, quietly, measurably. The Dedicated phenotype's median improvement of +0.100 km/h translates to roughly 3.5 seconds per kilometer. Over a marathon, that's about two and a half minutes. Not from a training camp or a magic session — from simply not stopping.

For the wearable industry: Speed-HR curves derived from consumer devices can detect real physiological adaptation at population scale. The method is crude compared to laboratory testing, but at n=641 with longitudinal data, the signal is there. Spathis et al. (2022) showed that free-living wearable data can predict VO₂max across 11,000 participants with r=0.82 — but required chest-worn accelerometers and deep learning infrastructure. The signal I've found here is less precise but requires only the pace and heart rate data already present on any GPS watch, which makes it far more scalable in practice. I am currently exploring how to incorporate this methodology into a live fitness tracking model, available directly via the Terra dashboard.

For coaches: The consistency finding deserves attention. In a dataset where I tested five different temporal splits, month-to-month training variability was the only variable that predicted improvement in every single one. Volume mattered, but only robustly in the wider splits. Consistency, the boring, unsexy, "just keep showing up" variable, seems bulletproof.

Limitations of Wearable Heart Rate Data

This is observational data. Fitter people may choose to run more, rather than running more making them fitter. I can't separate cause from effect.

Heart rate data from wrist-based optical sensors has known limitations, particularly at higher intensities where motion artefact increases. I cleaned aggressively, but some noise will remain.

I'm assuming runners perform broadly similar types of runs throughout the observation period. In reality, someone might shift from road to trail, or begin training on hillier routes — both of which would affect the HR-pace relationship independently of any fitness change. With session-level averages, I can't detect this.

"Early" versus "late" periods are defined by each user's own timeline, not a fixed calendar window. The median span is 3.5 years, but individual windows vary from 6 months to over 4 years.

The Intensity-Focused phenotype (n=31) is too small for confident conclusions. The negative trend is suggestive, not definitive.

I measured a proxy for aerobic efficiency only, one dimension of fitness. Speed work, hill strength, race tactics, and mental resilience don't show up in a speed-HR curve. A runner who "declined" by this metric may have improved in ways this method can't capture.

Methodology: Speed-Heart Rate Curves and Exercise Physiology

Our dataset consists of 856,000 running activities. These activities were filtered for valid speed and heart-rate ranges, then narrowed to a longitudinal cohort with sufficient training history for matched speed–heart rate comparisons across early and late training periods.

Speed-HR methodology: heart rate was binned in 5 bpm windows, and median speed was computed per bin in each period. Speed change is the median of (late speed − early speed) across matched bins. The season-controlled replication compared the same calendar quarters across different years.

Phenotyping: k-means clustering (k=3) on standardized features: runs/month, km/month, monthly CV, and, where available, median HR reserve fraction and high-intensity session proportion. Clusters were validated by silhouette analysis and manual inspection of training profiles.

Robustness: all core findings replicated across five temporal splits (halves through tenths). Thirds were selected as the primary analysis based on peak signal-to-noise ratio. Cross-split directional agreement ranged from 79% (thirds vs tenths) to 89% (thirds vs quarters).
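One plausible reading of "directional agreement" is the share of runners whose speed-HR shift has the same sign under two different splits. The sketch below implements that reading only; the definition, the function name, and the toy values are assumptions, not a description of the exact calculation used here.

```python
def directional_agreement(shifts_a, shifts_b):
    """Share of runners whose shift has the same sign in both splits.

    Each argument maps a runner id to that runner's speed change (km/h)
    under one temporal split. Only runners present in both are compared.
    """
    shared = [u for u in shifts_a if u in shifts_b]
    if not shared:
        return None
    agree = sum(1 for u in shared if (shifts_a[u] > 0) == (shifts_b[u] > 0))
    return agree / len(shared)


# Toy values (made-up): runner "d" did not qualify under the tenths split.
thirds = {"a": 0.12, "b": -0.30, "c": 0.05, "d": 0.40, "e": -0.02}
tenths = {"a": 0.20, "b": -0.10, "c": -0.04, "e": -0.15}
print(directional_agreement(thirds, tenths))  # 0.75
```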

References

- Schimpchen J, Freitas Correia P, Meyer T. Minimally invasive ways to monitor changes in cardiocirculatory fitness in running-based sports: a systematic review. International Journal of Sports Medicine. 2023;44(2):95–107. doi: 10.1055/a-1925-7468

- Buchheit M. Monitoring training status with HR measures: do all roads lead to Rome? Frontiers in Physiology. 2014;5:73. doi: 10.3389/fphys.2014.00073

- Spathis D, Perez-Pozuelo I, Gonzales TI, Wu Y, Brage S, Wareham N, Mascolo C. Longitudinal cardio-respiratory fitness prediction through wearables in free-living environments. NPJ Digital Medicine. 2022;5:176. doi: 10.1038/s41746-022-00719-1
